However, the fashion attribute tasks on iMaterialist all share the same domain and only differ in their labels. INaturalist tasks from iMaterialist tasks due to differences in the two problemĭomains. (Right) T-SNE visualization of the domain embeddings (using mean feature activations) for the same tasks. For example, the tasks of jeans (clothing type), denim (material) and ripped (style) recognition are close in the task embedding. Notice that some tasks of similar type (such as color attributes) cluster together but attributes of different task types may also mix when the underlying visual semantics are correlated. iMaterialist is well separated from iNaturalist, as it entails very different tasks (clothing attributes). Notice that the bird classification tasks extracted from CUB-200 embed near the bird classification task from iNaturalist, even though the original datasets are different. Colors indicate ground-truth grouping of tasks based on taxonomic or semantic types. (Left) T-SNE visualization of the embedding of tasks extracted from the iNaturalist, CUB-200, iMaterialist datasets.

Figure 1: Task embedding across a large library of tasks (best seen magnified).

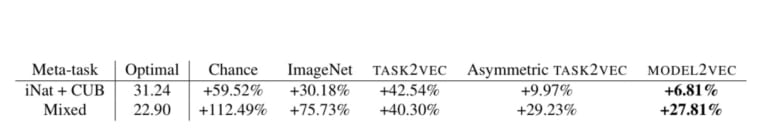

Selecting a feature extractor with task embedding obtains a performance close to the best available feature extractor, while costing substantially less than exhaustively training and evaluating on all available feature extractors. We present a simple meta-learning framework for learning a metric on embeddings that is capable of predicting which feature extractors will perform well. We also demonstrate the practical value of this framework for the meta-task of selecting a pre-trained feature extractor for a new task. We demonstrate that this embedding is capable of predicting task similarities that match our intuition about semantic and taxonomic relations between different visual tasks ( e.g., tasks based on classifying different types of plants are similar). This provides a fixed-dimensional embedding of the task that is independent of details such as the number of classes and does not require any understanding of the class label semantics. Given a dataset with ground-truth labels and a loss function defined over those labels, we process images through a “probe network” and compute an embedding based on estimates of the Fisher information matrix associated with the probe network parameters. The challenge in this assignment is to catalog the range of proposed ideas to address this key problem, identify which are most promising, and potentially implement some of them to perform an in-depth study on how what really works in a variety of real-world settings.We introduce a method to provide vectorial representations of visual classification tasks which can be used to reason about the nature of those tasks and their relations. This is a very fundamental problem in machine learning. Another technique from continual learning is to keep a memory of models trained on previous tasks, and try all of them on the new tasks to see which ones are worthy starting points for learning the new task. 
Many involve 'task embeddings' that map a given dataset/task to a vector representation.

There are a range of techniques to establish task similarity (see the links below). Meta-learning aims to do this even more efficiently by training over distributions of very similar prior tasks.Ī key question here is "When is a previous task similar enough so that I can effectively transfer information from it"? If the task is similar, the transferred priors can speed up learning dramatically, but it is different, such as prior can make learning the new task much less efficient. Transfer learning and continual learning aim to select and transfer previously learned representations/embeddings so that new tasks can be learned quickly. Several areas of machine learning aim to mimic this ability. For instance, a child first learns how to walk, and then efficiently learns how to run (obviously without starting from scratch). Humans are very efficient learners because we can very efficiently leverage prior experience when learning new tasks. Back to list Project: Task similarity and transferability in machine learning Description
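The embedding procedure described above (a probe network plus Fisher information estimates, then a distance between embeddings) can be illustrated with a toy sketch. Everything below is an assumption made for illustration, not the method as published: the "probe network" is reduced to a linear softmax head over two hand-made features, the embedding is the diagonal empirical Fisher information with respect to a shared per-feature scale (so its length does not depend on the number of classes), plain cosine distance stands in for the learned metric, and the names `train_head`, `fisher_embedding`, `embed_task` and `nearest_task` are hypothetical.

```python
import math

def predict(W, x):
    """Softmax class probabilities of a linear head W applied to features x."""
    logits = [sum(w * v for w, v in zip(row, x)) for row in W]
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train_head(xs, ys, n_classes, epochs=100, lr=0.5):
    """Fit the head by full-batch gradient ascent on the mean log-likelihood."""
    d = len(xs[0])
    W = [[0.0] * d for _ in range(n_classes)]
    for _ in range(epochs):
        grad = [[0.0] * d for _ in range(n_classes)]
        for x, y in zip(xs, ys):
            p = predict(W, x)
            for k in range(n_classes):
                err = (1.0 if k == y else 0.0) - p[k]
                for j in range(d):
                    grad[k][j] += err * x[j] / len(xs)
        for k in range(n_classes):
            for j in range(d):
                W[k][j] += lr * grad[k][j]
    return W

def fisher_embedding(xs, ys, W):
    """Diagonal empirical Fisher w.r.t. a shared per-feature scale s, at s = 1.

    With logits z_k = sum_j W[k][j] * s[j] * x[j], the gradient is
    d log p(y|x) / d s[j] = x[j] * sum_k (1[k == y] - p[k]) * W[k][j].
    The result has one entry per feature, whatever the number of classes.
    """
    d = len(xs[0])
    fisher = [0.0] * d
    for x, y in zip(xs, ys):
        p = predict(W, x)
        for j in range(d):
            g = x[j] * sum(((1.0 if k == y else 0.0) - p[k]) * W[k][j]
                           for k in range(len(W)))
            fisher[j] += g * g / len(xs)
    return fisher

def cosine_distance(a, b):
    """1 - cosine similarity; returns 1.0 if either vector is all zeros."""
    dot = sum(u * v for u, v in zip(a, b))
    norm = math.sqrt(sum(u * u for u in a)) * math.sqrt(sum(v * v for v in b))
    return 1.0 - dot / norm if norm > 0 else 1.0

def nearest_task(query, library):
    """Name of the library task whose embedding is closest to `query`."""
    return min(library, key=lambda name: cosine_distance(query, library[name]))

def embed_task(xs, ys):
    """Train the toy probe head on one task, then return its Fisher embedding."""
    n_classes = max(ys) + 1
    return fisher_embedding(xs, ys, train_head(xs, ys, n_classes))

# Toy data: a symmetric grid of 2-D "features" (hypothetical, no images involved).
values = [-2.0, -1.5, -1.0, -0.5, 0.5, 1.0, 1.5, 2.0]
xs = [(a, b) for a in values for b in values]

# Task A: 2 classes decided by feature 0.
labels_a = [int(x[0] > 0) for x in xs]
# Task B: 3 classes, also decided by feature 0.
labels_b = [0 if x[0] < -0.75 else 2 if x[0] > 0.75 else 1 for x in xs]
# Task C: 2 classes decided by feature 1.
labels_c = [int(x[1] > 0) for x in xs]

emb_a, emb_b, emb_c = (embed_task(xs, ys) for ys in (labels_a, labels_b, labels_c))

print("d(A, B) =", cosine_distance(emb_a, emb_b))
print("d(A, C) =", cosine_distance(emb_a, emb_c))
print("closest known task to B:", nearest_task(emb_b, {"A": emb_a, "C": emb_c}))
```

By construction, tasks A and B depend on the same feature and task C on the other one, so A and B should land close together and C far away, even though B has a different number of classes. In a transfer setting one would then start from the feature extractor that worked best on the nearest known task instead of trying every stored model.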
